A Practical Mental Model for AI in Lending: From Document Intelligence to Agentic Execution

AI has become table stakes in lending software. Today, nearly every platform markets itself as “AI-enabled,” often using identical language to describe vastly different capabilities.

For executive teams, the critical question is no longer whether a platform has AI, but what specific responsibilities that AI is capable of handling. While two vendors may both claim to use AI, their outcomes can be worlds apart: one might simply accelerate document sorting, while another actively drives the loan through the fulfillment process.

Much of the current confusion stems from treating AI as a “feature” rather than a responsibility embedded in the workflow. Vague terms like “AI-powered” or “intelligent automation” reveal very little about how work actually gets done when volume spikes or risk increases.

A more effective way to evaluate AI is to focus on accountability: is the system organizing information, helping people understand it, or taking responsibility for executing the work? This mental model makes these differences visible and clarifies where real operational impact occurs.

AI as Responsibility, Not Technology

When you view AI through the lens of responsibility, consistent patterns emerge. Regardless of the underlying technology, AI in lending typically addresses one of three operational needs:

- Sense-making: Organizing unstructured inputs.

- Interpretation: Helping people understand and reason with data.

- Execution: Carrying work forward within a defined process.

These uses form three distinct layers of applied intelligence. Each layer removes a different kind of friction, and each depends on the layer beneath it. At the same time, they are not interchangeable. Systems designed to organize information do not resolve execution bottlenecks, and systems designed to explain data do not, by themselves, move work to completion.

This layered view provides a clearer foundation for understanding how AI shows up in lending operations, and why different approaches lead to very different outcomes.

Layer 1: Document / Input Intelligence

AI as a fast, consistent reader

Lending workflows are dominated by unstructured inputs. Inspections, invoices, budgets, lien waivers, forms, and reports arrive in different formats and require time-consuming manual handling before any review or decision can happen.

At the first layer, AI is used to make sense of this raw material. Its role is not to judge or decide, but to reliably convert unstructured inputs into information the system can work with.

At this layer, AI is responsible for:

- Ingesting unstructured documents

- Extracting relevant data elements

- Normalizing information into structured formats

- Identifying mismatches, gaps, or notable conditions
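To make these responsibilities concrete, here is a minimal Python sketch of the normalize-and-flag step. It is illustrative only and does not describe any particular platform: the `DrawRequest` schema, the field names, and the `normalize` helper are all hypothetical, and it assumes an upstream extraction step has already pulled raw text values out of the documents.

```python
from dataclasses import dataclass, field
from decimal import Decimal, InvalidOperation
from typing import Dict, List, Optional


@dataclass
class DrawRequest:
    """Structured record built from raw extracted fields (hypothetical schema)."""
    invoice_total: Optional[Decimal]
    requested_amount: Optional[Decimal]
    has_lien_waiver: bool
    flags: List[str] = field(default_factory=list)


def parse_amount(raw: Optional[str]) -> Optional[Decimal]:
    """Normalize strings such as '$12,500.00' into Decimal; None if unreadable."""
    if raw is None:
        return None
    cleaned = raw.replace("$", "").replace(",", "").strip()
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None


def normalize(raw_fields: Dict[str, str]) -> DrawRequest:
    """Convert raw extracted text into a structured record and flag issues."""
    record = DrawRequest(
        invoice_total=parse_amount(raw_fields.get("invoice_total")),
        requested_amount=parse_amount(raw_fields.get("requested_amount")),
        has_lien_waiver=raw_fields.get("lien_waiver", "").strip().lower() == "yes",
    )
    # Gaps: a required value was missing or could not be read.
    if record.invoice_total is None:
        record.flags.append("missing invoice_total")
    if record.requested_amount is None:
        record.flags.append("missing requested_amount")
    if not record.has_lien_waiver:
        record.flags.append("missing lien waiver")
    # Mismatch: the request and its supporting invoice disagree.
    if (
        record.invoice_total is not None
        and record.requested_amount is not None
        and record.invoice_total != record.requested_amount
    ):
        record.flags.append("requested amount does not match invoice total")
    return record


record = normalize(
    {"invoice_total": "$12,500.00", "requested_amount": "12000", "lien_waiver": "yes"}
)
print(record.flags)
```

Note that the sketch only surfaces the mismatch. It does not decide what to do about it, which is the boundary this layer is meant to respect.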

The value of this capability is primarily administrative. By reducing the effort required to locate, sort, and re-enter information, teams spend less time handling inputs and more time reviewing outcomes.

Operationally, this shows up as:

- Less manual re-keying of data

- Fewer transcription and consistency errors

- Faster preparation of files for human review

It’s important to be precise about what this layer does not do. Document and input intelligence does not approve requests, make policy judgments, or move work forward on its own. It prepares information so humans, or more advanced AI layers, can act efficiently and consistently.

Reducing this kind of administrative effort has a meaningful impact on scale, but it is only the foundation. Higher-order efficiency comes from how that structured information is understood and applied.

Layer 2: Conversational Intelligence

AI as a smart analyst

Once information has been structured, the next bottleneck in lending operations is no longer access to data, but understanding it. Teams spend significant time navigating dashboards, running reports, and exporting data just to answer routine questions about status, risk, or performance.

Conversational intelligence addresses this gap by making structured data easier to explore and interpret. Rather than requiring users to build queries or move between tools, AI supports analysis through natural interaction.

At this layer, AI enables users to:

- Ask questions in natural language

- Summarize trends, patterns, or anomalies

- Compare loans, projects, or portfolios

- Generate narrative explanations or tables without building reports

The value of this layer is primarily cognitive. It reduces the effort required to turn data into understanding, especially for non-technical users who don’t live inside reporting tools.

Operationally, this shows up as:

- Less time navigating dashboards and reports

- Fewer ad hoc exports and one-off analyses

- Faster movement from insight to informed action

It’s important to be clear about the limits of this capability. Conversational intelligence can explain what’s happening and help users reason about data, but it still stops short of execution. Insight alone does not resolve operational bottlenecks when volume increases.

This layer improves how teams understand their work. Removing operational constraints requires intelligence that can apply that understanding to move work forward.

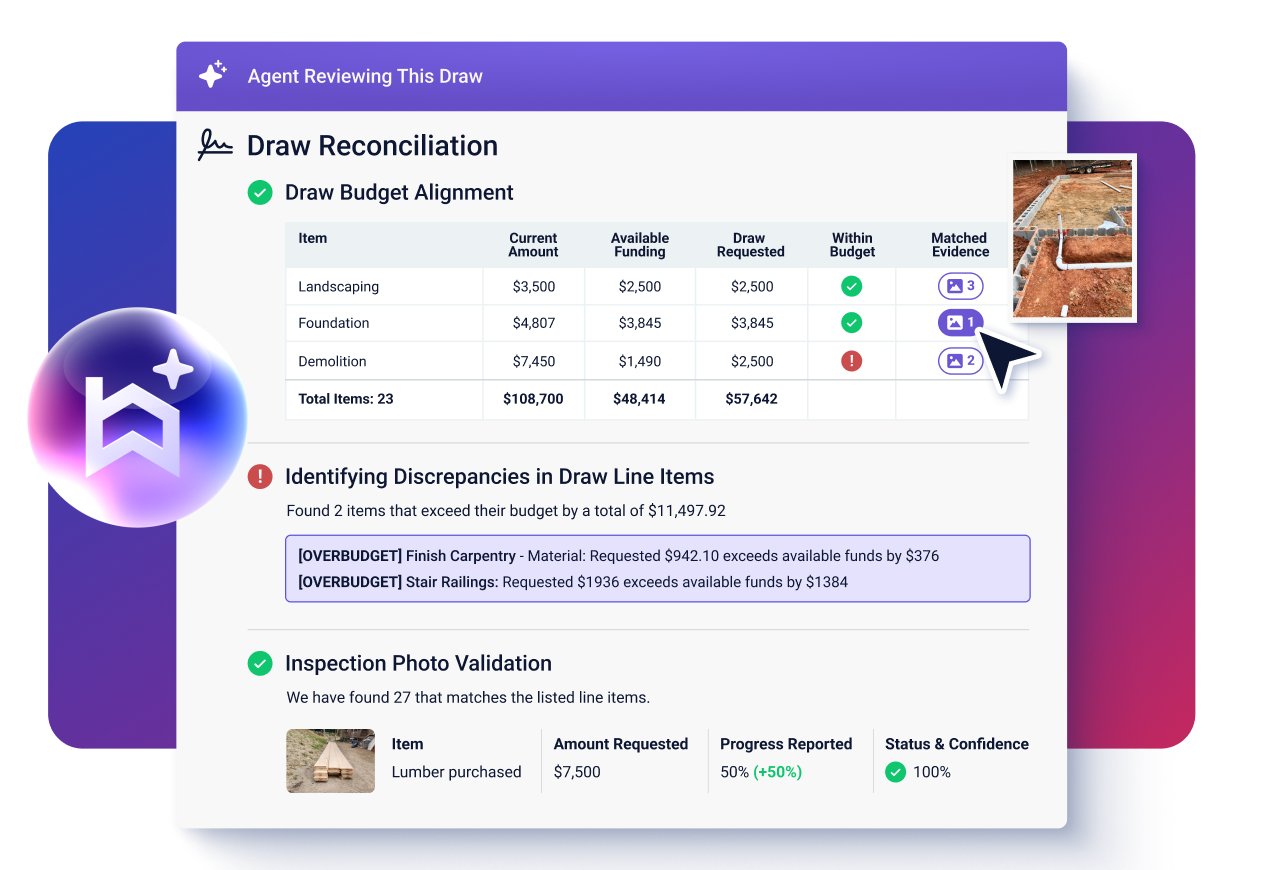

Layer 3: Agentic Intelligence

AI as a task assistant

Agentic intelligence represents a shift from insight to action. Instead of stopping at analysis or recommendation, AI systems at this layer participate directly in operational work.

At this level, AI is responsible for:

- Evaluating situations using structured data and policy logic

- Planning appropriate next steps

- Executing defined tasks inside real workflows

This changes the nature of efficiency. Rather than simply reducing the time spent reviewing information, agentic intelligence removes operational effort by taking ownership of repeatable, policy-bound work.

Crucially, this does not mean removing human oversight. Agentic systems are designed to operate within clear governance boundaries. They follow lender-defined rules, produce evidence-backed outputs, and escalate exceptions when conditions fall outside approved thresholds.

In practice, autonomy exists on a spectrum:

- Review-only, where the system evaluates and summarizes findings for human decision-making

- Assist, where it completes defined tasks while keeping humans in the loop

- Automate, where low-risk actions are carried through end-to-end, with escalation reserved for exceptions

The underlying intelligence remains the same. What changes is how much responsibility is delegated.
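One way to picture this is a single policy check whose result is routed differently depending on the delegated mode. The Python sketch below is a simplified illustration under stated assumptions, not a description of any vendor's implementation: the `Mode` names mirror the spectrum above, while the `Finding` fields, the step names, and the 50,000 threshold are hypothetical placeholders for lender-defined rules.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    REVIEW_ONLY = "review_only"
    ASSIST = "assist"
    AUTOMATE = "automate"


@dataclass
class Finding:
    amount: float    # size of the request being evaluated
    open_flags: int  # unresolved issues found during evaluation


def within_policy(finding: Finding, max_auto_amount: float) -> bool:
    """The same evaluation runs in every mode; only the routing differs."""
    return finding.open_flags == 0 and finding.amount <= max_auto_amount


def next_step(finding: Finding, mode: Mode, max_auto_amount: float = 50_000.0) -> str:
    """Decide how far the system carries the work, given the delegated mode."""
    if mode is Mode.REVIEW_ONLY:
        # The system evaluates and summarizes; a person makes the decision.
        return "summarize_for_human_decision"
    if not within_policy(finding, max_auto_amount):
        # Anything outside the approved thresholds goes to a person.
        return "escalate_to_human"
    if mode is Mode.ASSIST:
        # The task is completed, but a human stays in the loop.
        return "complete_task_pending_human_sign_off"
    # Mode.AUTOMATE: low-risk work is carried through end to end.
    return "execute_end_to_end"


clean = Finding(amount=12_000.0, open_flags=0)
for mode in Mode:
    print(mode.value, "->", next_step(clean, mode))
```

Changing the mode or the threshold changes how much responsibility is delegated without touching the evaluation logic itself.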

This is the layer where AI begins to affect throughput, not just productivity. By carrying work forward rather than waiting for human coordination at every step, agentic intelligence enables lending operations to scale without adding proportional manual effort.

How the Layers Work as a System

The real power of AI is found in the compound effect of these layers. When they work in sequence, they eliminate three distinct sources of drag:

- Administrative drag: Layer 1 removes the “manual labor” of data intake.

- Cognitive drag: Layer 2 removes the “analytical labor” of data interpretation.

- Operational drag: Layer 3 removes the “coordination labor” of moving work forward.

Together, they create a step-change in scalability. As volume increases, the combined effect becomes more pronounced: fewer handoffs, fewer delays, and significantly less human effort required to manage the same workload.

How Executives Can Use This Model to Evaluate AI Claims

Once AI is viewed through the lens of responsibility, it becomes easier to evaluate platform claims with more precision. Instead of asking whether a system is “AI-powered,” leaders can focus on what the AI is actually accountable for inside the operation.

When a platform claims to use AI, a few questions quickly clarify its role:

- What inputs does the system understand automatically, without manual preparation?

- Can users reason about data directly, without building reports or exporting information?

- Does the system execute work, or does it stop at recommendations and alerts?

In practice, many platforms deliver strong capabilities at the first or second layer and still market those features as automation. While those layers provide meaningful efficiency gains, they do not fundamentally change how work moves through the organization.

True operational leverage appears when responsibility shifts — when systems are designed not just to accelerate tasks, but to carry defined work forward under policy and governance. This distinction helps executives move beyond surface-level claims and assess how AI will actually affect capacity, risk, and scale as volume grows.

Why Agentic AI Builds on, Not Replaces, the Other Layers

Execution-focused AI is often discussed as a leap forward, but in practice it only works when the underlying foundations are sound. Systems that act without reliable inputs or clear context tend to amplify errors rather than eliminate them.

Agentic intelligence depends on two prerequisites. First, inputs must be structured and consistent so decisions are grounded in accurate information. Second, context must be clear enough for the system to interpret what matters and why. Without those conditions, execution becomes brittle, difficult to govern, and risky to scale.

This is why agentic AI is harder to build, and harder to deploy safely, than tools that stop at extraction or analysis. Carrying work forward requires not only intelligence but also confidence in the information and reasoning that precede action.

Platforms that clearly define AI responsibility across layers tend to produce more durable outcomes. Instead of overextending a single capability, they treat execution as the final step in a system designed for reliability, transparency, and control.

In practice, Built applies all three layers in production, with its AI Draw Agent representing the first agentic implementation in a broader roadmap designed around this layered approach.

Moving Beyond “AI as a Feature”

AI is no longer a differentiator by itself. As intelligence becomes commonplace across lending platforms, value is defined less by whether AI exists and more by where it is applied and what work it takes on.

A layered view helps leaders cut through broad claims and focus on operational reality. It makes it easier to compare platforms clearly, set realistic expectations for impact, and understand how intelligence actually changes the flow of work as volume and complexity increase.

Most importantly, this perspective shifts the conversation from speed alone to responsibility. Organizing information, explaining it, and executing against it are fundamentally different capabilities, with very different implications for scale, risk, and control.

The future of lending operations belongs to systems designed to read, reason, and act, with accountability built in. Leaders who evaluate AI through that lens are better positioned to invest in capabilities that don’t just accelerate tasks, but meaningfully change how work gets done.

Andy brings years of experience partnering with community and regional lenders to optimize their CRE construction lending programs. Leveraging a background in enablement, communications, and strategic leadership, he’s worked with hundreds of institutions to test workflows, train teams, and strengthen the controls that support safe, efficient draw administration. At Built, Andy focuses on making construction lending processes clearer and more consistent, ensuring lenders can move faster while maintaining confidence in every project.