Don’t Let AI Contract Language Be the Reason You Stop Innovating

Adoption of AI in real estate finance rarely stalls in the product demo, and it rarely stalls because the AI functionality is weak. It stalls during contract negotiation.

The real problem is that no one can agree on what the contract should say. The vendor’s standard agreement doesn’t answer the questions that matter most, and the lender’s terms don’t address the nuances of a particular vendor’s technology. I’ve watched this pattern repeat across institutions of every size.

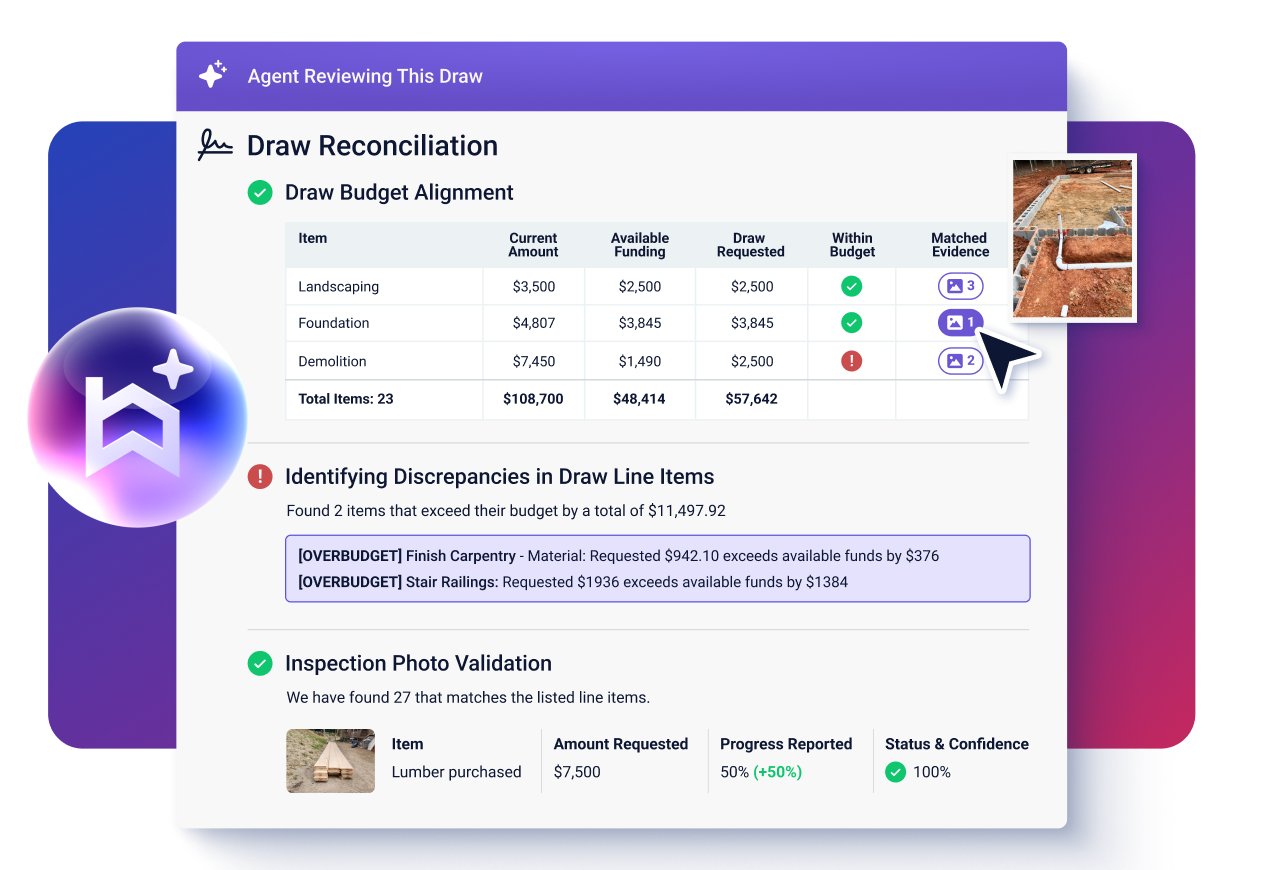

AI is already here, and the best vendors are already embedding it into their products. Built’s AI Agent handles draw reviews, inspection coordination, and portfolio monitoring. These features are live and actively helping lenders serve their borrowers.

The question is whether your agreement makes clear who reviews the output, who takes on the risk, and what the system is actually allowed to do.

“Best practices” for AI governance are still forming. Regulatory frameworks are evolving, with state and federal agencies actively developing rules for the use of AI in financial services. But the contract questions that matter most are already clear, and they’re the same ones coming up in every procurement conversation I’m part of.

Key Takeaways

- AI adoption stalls during the procurement stage: contract language is the most common barrier to AI adoption in real estate finance.

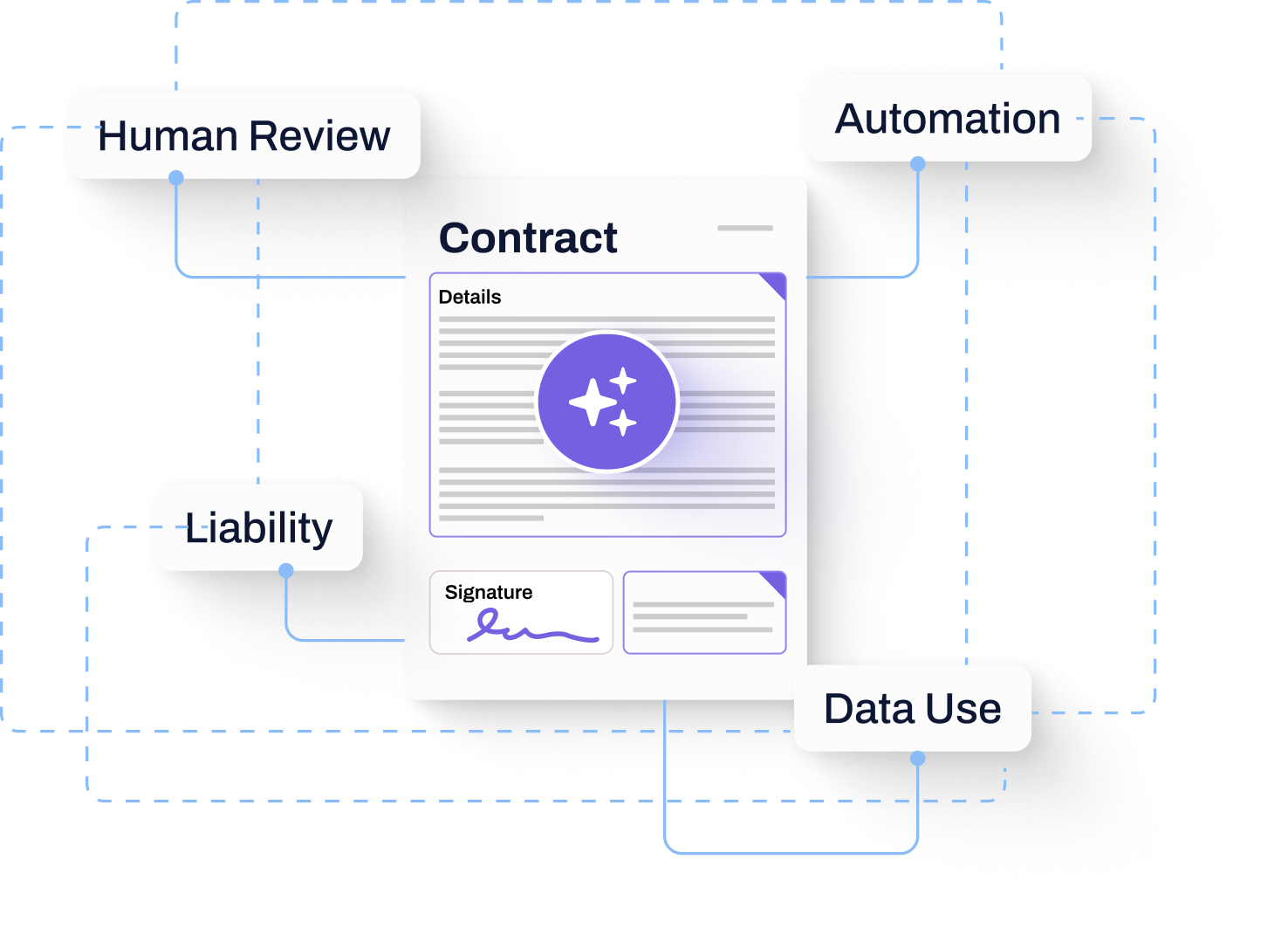

- Four areas of risk matter most to lenders: human review of AI outputs, the ability to opt out of automation, restrictions on the use of their data in vendors’ AI models, and who bears responsibility for the actions and outputs of AI in the vendor’s services. Each area requires distinct contract language.

- Early frameworks win: institutions that align on AI contract language now will move faster and with greater confidence than those waiting for regulatory consensus.

Four Contract Clauses That Determine AI Risk in Real Estate Finance

Every AI exhibit I’ve reviewed, and every one we’ve worked through with lenders and asset managers, comes down to four areas:

- Human review

- Optional automation

- Data use and model training

- Liability and warranty treatment

Get these four right and you have a functional framework. Leave any one of them vague and you’ve created a gap that will surface at the worst possible moment: during a draw dispute, an audit, or a regulatory exam.

These aren’t abstract governance concepts. In construction lending, a draw disbursement is a real capital event. More than $317 billion in real estate capital moves through Built’s platform.

Over 300 lenders operate across more than 569,000 active projects. The downstream consequences of ambiguous AI terms affect real funding decisions on live deals.

Human Review Requirements Must Be Explicit, Not Assumed

The most common gap in AI contracts is the assumption that human review is happening somewhere. It isn’t always. And if the contract doesn’t specify it, neither party has clear accountability when review doesn’t happen.

There is a meaningful operational distinction between AI that assists a user and AI that executes a decision. AI that surfaces a risk flag in a draw review is assisting. AI that routes, approves, or advances a workflow step without a human touchpoint is executing.

Both have legitimate uses, but they shouldn’t be governed by the same vague clause.

Here’s what the contract needs to say explicitly:

- Whether AI outputs require human review before action is taken

- Whether the vendor provides a human-in-the-loop process by default, or whether the client is responsible for configuring one

- Who bears accountability when that review doesn’t happen

The answer to that last question is almost always the client. That’s appropriate, but it needs to be stated, not implied.

When accountability is implied, you get disputes. When it’s stated, you get clarity, and you can build internal procedures around it.

How Optional Automation, Data Use, and Liability Each Require Separate Contract Treatment

Optional automation, data use, and liability each carry different risks and require distinct treatment under the contract.

1. Optional automation should stay optional

Optional automation should be exactly that. It should be customer-enabled and customer-configured. The client should be responsible for the following:

- Providing configuration parameters that match their internal approval standards

- Testing the automation before it runs on live workflows

- Maintaining exception handling and monitoring procedures

That work sits with the client. The contract should make clear that the client has the controls to do it.

2. Data use and model training need an explicit, written answer

Data use and model training are where the most anxiety surfaces in procurement conversations, and that anxiety is warranted. Clients need to know whether their data, including loan files, draw histories, and borrower information, is being used to train or fine-tune the vendor’s models. The answer should be explicit and unambiguous.

At Built, the position is straightforward: client-specific data isn’t used to train or fine-tune Built’s models. Your vendor should commit to that in writing, not just in a sales conversation. Built’s AI Draw Agent reflects this commitment in practice.

3. Liability and warranty language needs to be balanced

Liability and warranty language needs to acknowledge that AI outputs are probabilistic and can contain inaccuracies, without eliminating vendor accountability entirely.

A contract that pretends otherwise is setting you up for a dispute.

A vendor that disclaims all liability while retaining the right to modify AI functionality at will isn’t taking a balanced position either. The vendor should be obligated to do the following:

- Operate lawfully

- Maintain reasonable safeguards

- Not materially degrade AI security through updates

That’s a floor for vendor obligations.

What a Workable AI Exhibit Covers, and What It’s Designed to Accomplish

The goal of an AI exhibit is to create a single source of truth for how AI is used, governed, and reviewed.

When a question comes up, internally or from an auditor or examiner, the answer should be in the document.

Consider a mid-sized regional lender managing a portfolio of 200 active construction loans. During a routine audit, the examiner asks how AI-assisted draw reviews are supervised and who is accountable if an automated flag is missed.

If the lender’s vendor agreement contains a clear AI exhibit, that question has a documented answer. If it doesn’t, the lender is left reconstructing accountability from email threads and internal documents. That process delays the audit and creates regulatory exposure.

A workable AI exhibit should cover the following, at a minimum:

- How AI functionality is defined and what modifications the vendor can make without triggering a material change

- Whether AI outputs require human review and who is responsible for configuring that requirement

- How optional automation is enabled, configured, and supervised by the client

- Whether client data is used to train or fine-tune models

- How liability is allocated for decisions made based on AI outputs

- What safeguards the vendor maintains and what happens if AI functionality is suspended

Getting these terms in writing, in clear, plain language, is what enables institutions to adopt AI with confidence. Without that clarity, some institutions spend six months reviewing an AI exhibit and still aren’t sure what they agreed to.

To see how Built approaches AI governance in practice, across construction loan administration, draws, inspections, and portfolio workflows, book a demo today. You can also explore how agentic AI is reshaping real estate lending on the Built blog. Built works through these questions with lenders and asset managers every day, and the framework is available to share.

The institutions that move fastest on AI adoption will be the ones that get the contract language right first.

Scott Thissen is VP of Enterprise Sales at Built Technologies, where he leads go-to-market strategy and partnerships with top-tier financial institutions modernizing their construction and real estate lending operations.

Scott brings a unique perspective to lending technology: he combines deep sales leadership experience with hands-on expertise in how AI is reshaping customer conversations and deal dynamics. He’s spent the past year reimagining how sales teams can authentically engage with lenders on AI-driven transformation, moving beyond vendor pitches to genuine problem-solving around operational efficiency, risk, and scalability.

His work at Built focuses on helping his teams act as consultative real estate experts for clients navigating today’s new technology landscape, while building solutions that actually solve real lending problems. He’s passionate about creating frameworks that enable teams to sell smarter and build deeper customer relationships in an AI-driven world.

Scott lives in Brentwood, Tennessee, with his wife and three kids, and spends his free time running to the kids’ soccer games and ballet recitals.